Lawyers across the country are finding themselves in trouble for submitting poorly prepared court documents written with the assistance of AI, a clear sign of how quickly the technology is penetrating the legal system.

It was inevitable, however, that AI would not only introduce errors into filings but also be used to produce "evidence."

This scenario recently unfolded in a California courtroom during a housing dispute, ending unfavorably for the party that utilized AI.

As reported by NBC News, the plaintiffs in the case Mendones v. Cushman & Wakefield, Inc. submitted a peculiar video intended to serve as witness testimony. The video featured a witness whose face was fuzzy and barely animated. Apart from infrequent blinking, her lips were the only part of her face that moved, while the rest of her expression remained static. The video also contained an awkward cut, after which the movements repeated identically.

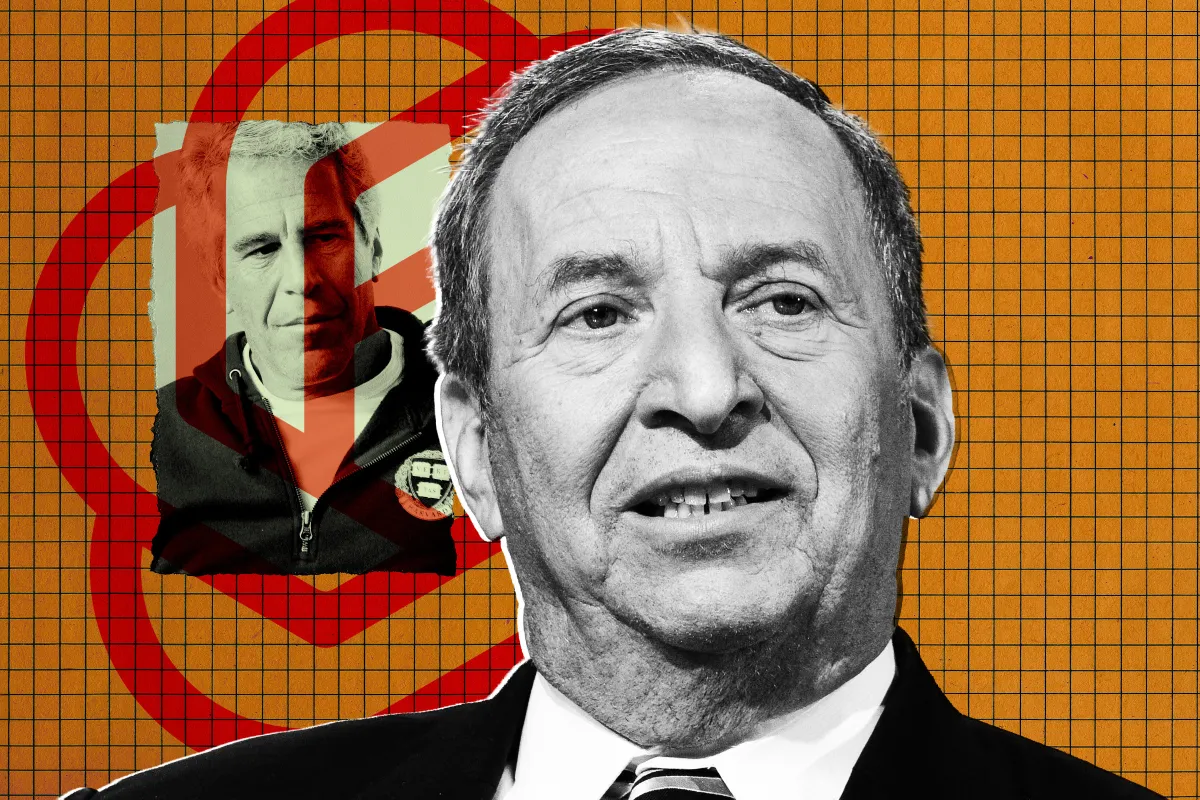

In short, it was an obvious AI-generated deepfake. According to reports, this may be one of the first documented instances of a deepfake being submitted as purportedly authentic evidence in court, or at least one of the first to be detected.

Judges have shown little tolerance for AI making a joke of their profession, and the judge in this case, Victoria Kolakowski, was no exception. Kolakowski dismissed the case on September 9, citing the AI-generated witness testimony as the reason. The plaintiffs filed a motion for reconsideration, claiming Kolakowski failed to prove that their highly flawed deepfake was produced by AI. Their request was denied on November 6.

Kolakowski mentioned that the legal profession is only beginning to address the challenges posed by AI. The launch of OpenAI’s video generating app, Sora 2, served as an alarm for how effortlessly convincing fake video evidence could be manufactured, with users quickly demonstrating the ability to create realistic videos of people committing crimes, such as shoplifting. Making deepfakes might once have required technical skills, but now, anyone with a smartphone can generate them.

"The judiciary in general is aware that big changes are happening and wants to understand AI, but I don’t think anybody has figured out the full implications," Kolakowski told NBC. "We’re still dealing with a technology in its infancy."

Among judges and legal experts interviewed by NBC, there are two main schools of thought on addressing AI. One advocates preempting the AI threat by revising judicial rules, such as establishing guidelines for lawyers to verify their evidence, or assigning the responsibility of identifying AI forgeries to judges rather than juries. The other believes judges should be left to sort it out among themselves while waiting to see whether an apocalypse of AI-forged evidence actually occurs.

Currently, the latter viewpoint is guiding official policy. In May, NBC noted, the US Judicial Conference’s Advisory Committee on Evidence Rules rejected suggestions to revise the guidance on AI, arguing that "existing standards of authenticity are sufficient for regulating AI evidence."

The committee signaled it was open to making such changes in the future, a process that could take years. In the meantime, AI will proliferate in courtrooms, likely unnoticed by most.

"I think AI-generated fake or modified evidence is happening much more frequently than is publicly reported," Judge Erica Yew of California’s Santa Clara County Superior Court told NBC.

With information from https://futurism.com/artificial-intelligence/judge-horrified-lawyers-submit-ai-evidence